You, your team, your organisation are all going to fail at something, sometime. The question is, what will you do next?

Matthew Syed’s Black Box Thinking addresses our tendency to airbrush over failure. It proposes open-loop systems and mindsets that collect, examine and apply the lessons of failure.

LESSONS FROM AVIATION.

Syed uses the metaphor of the aviation industry's black boxes, which capture crucial data in a disaster so that investigators can explain what happened and inform future practice. He makes the point with a quote from pilot Chesley Sullenberger: “Everything we know in aviation, every rule in the rule book, every procedure we have, we know because someone somewhere died . . . We have purchased at great cost, lessons literally bought with blood.”

CLOSED vs OPEN SYSTEMS.

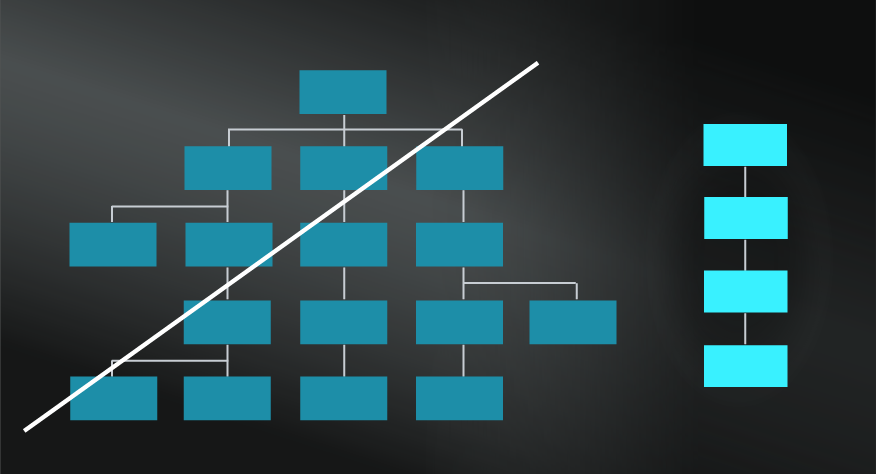

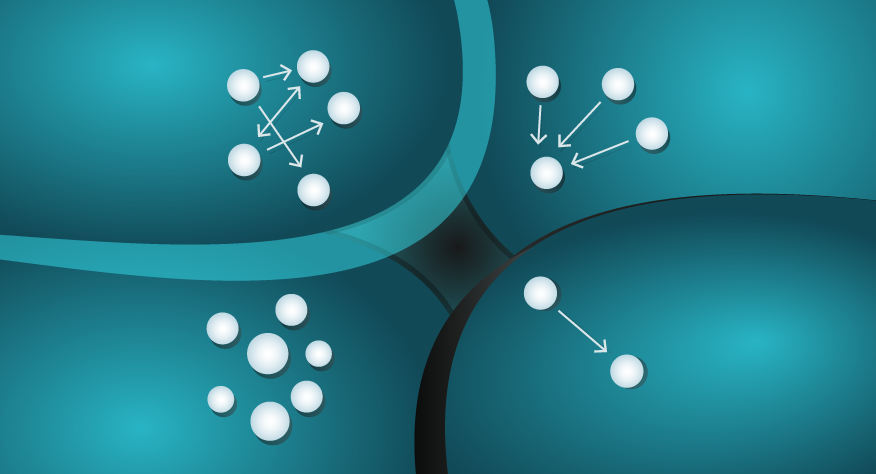

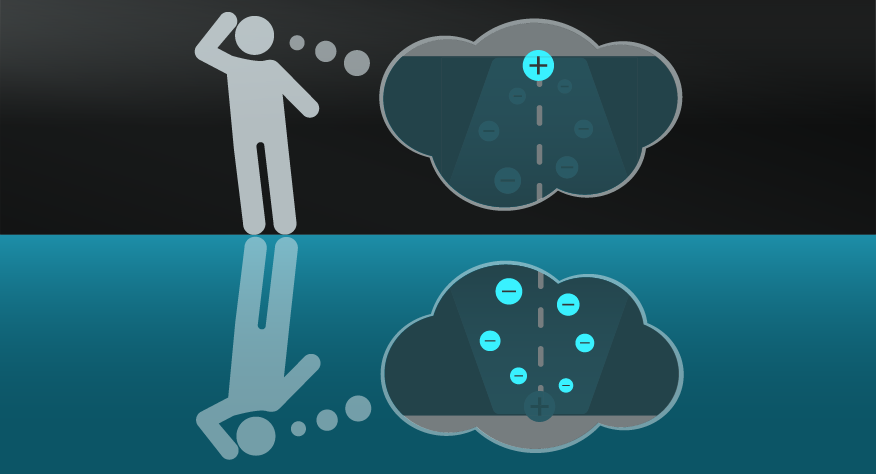

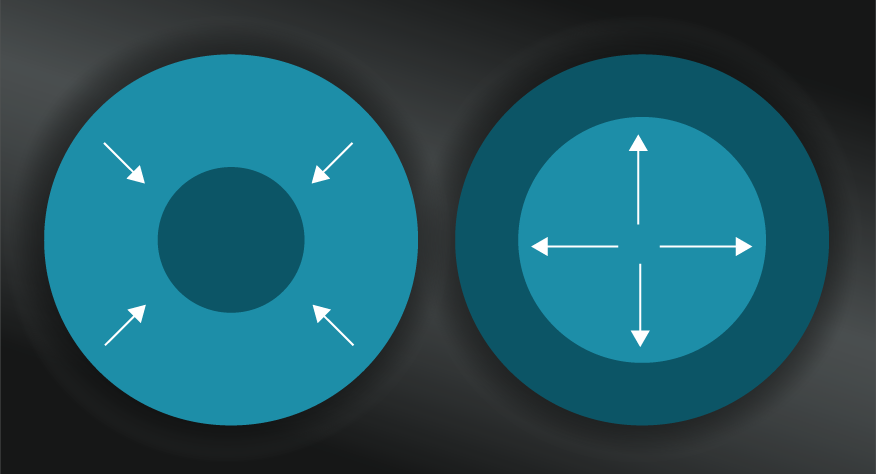

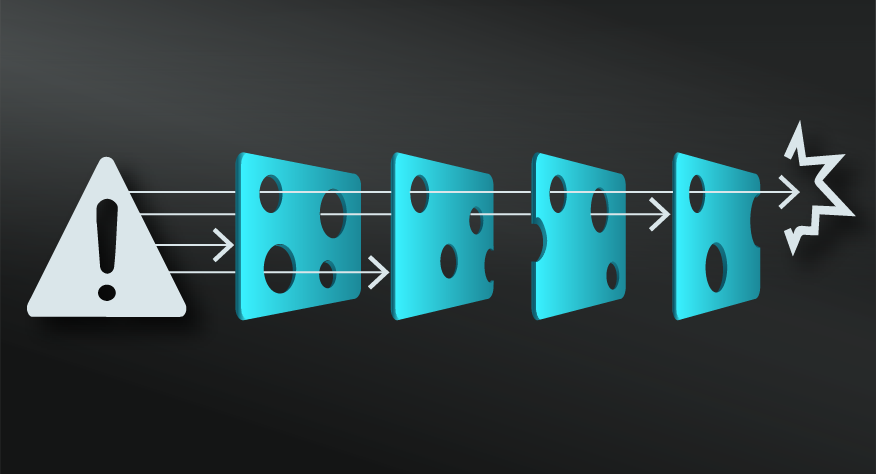

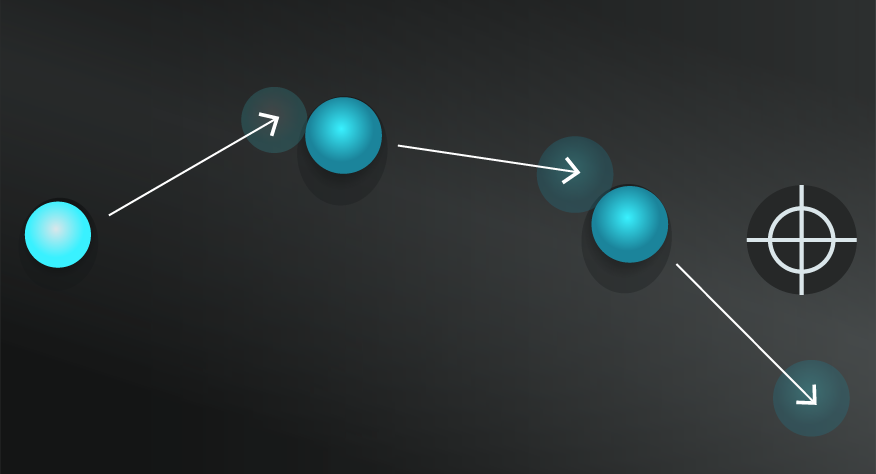

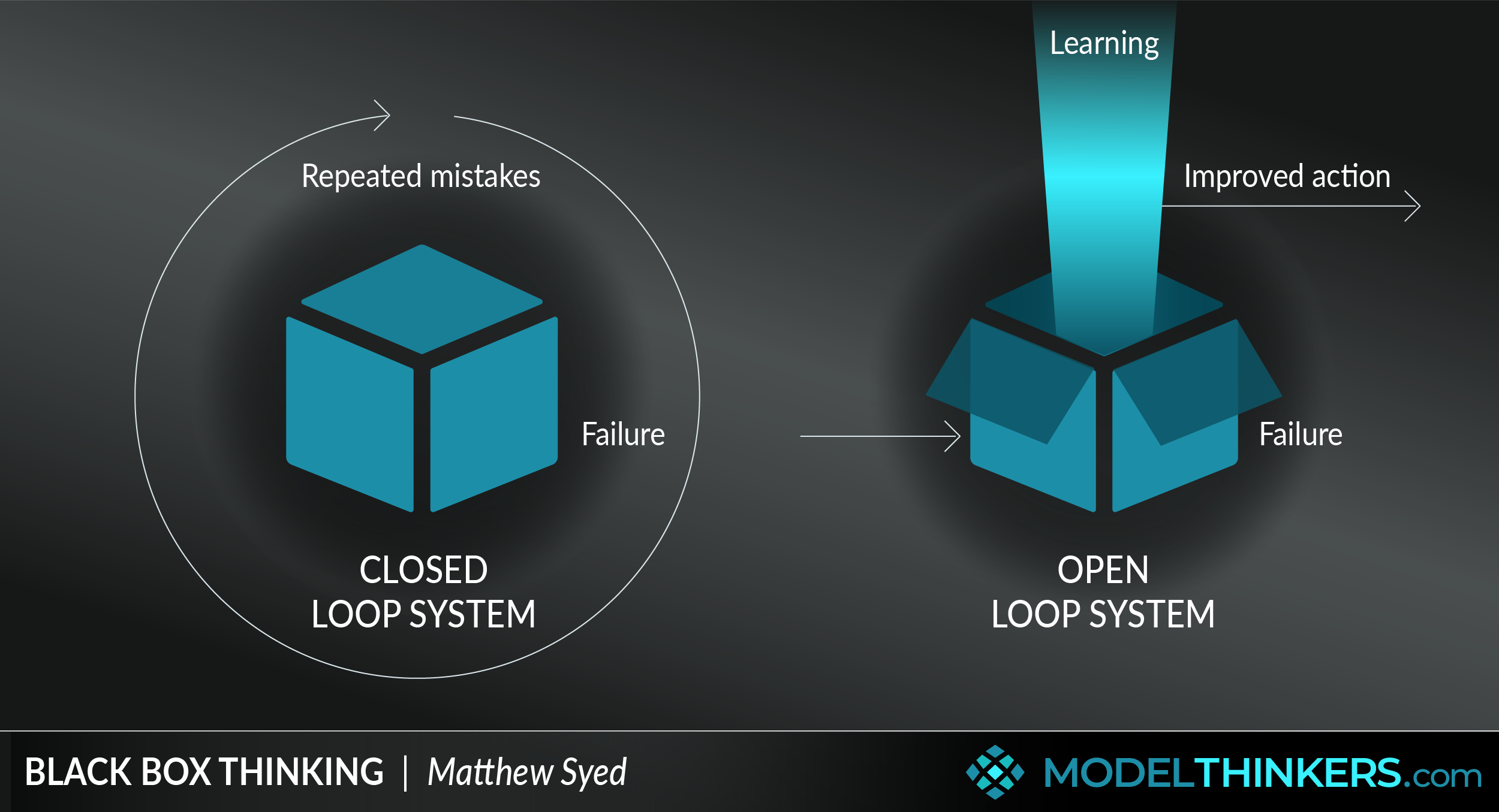

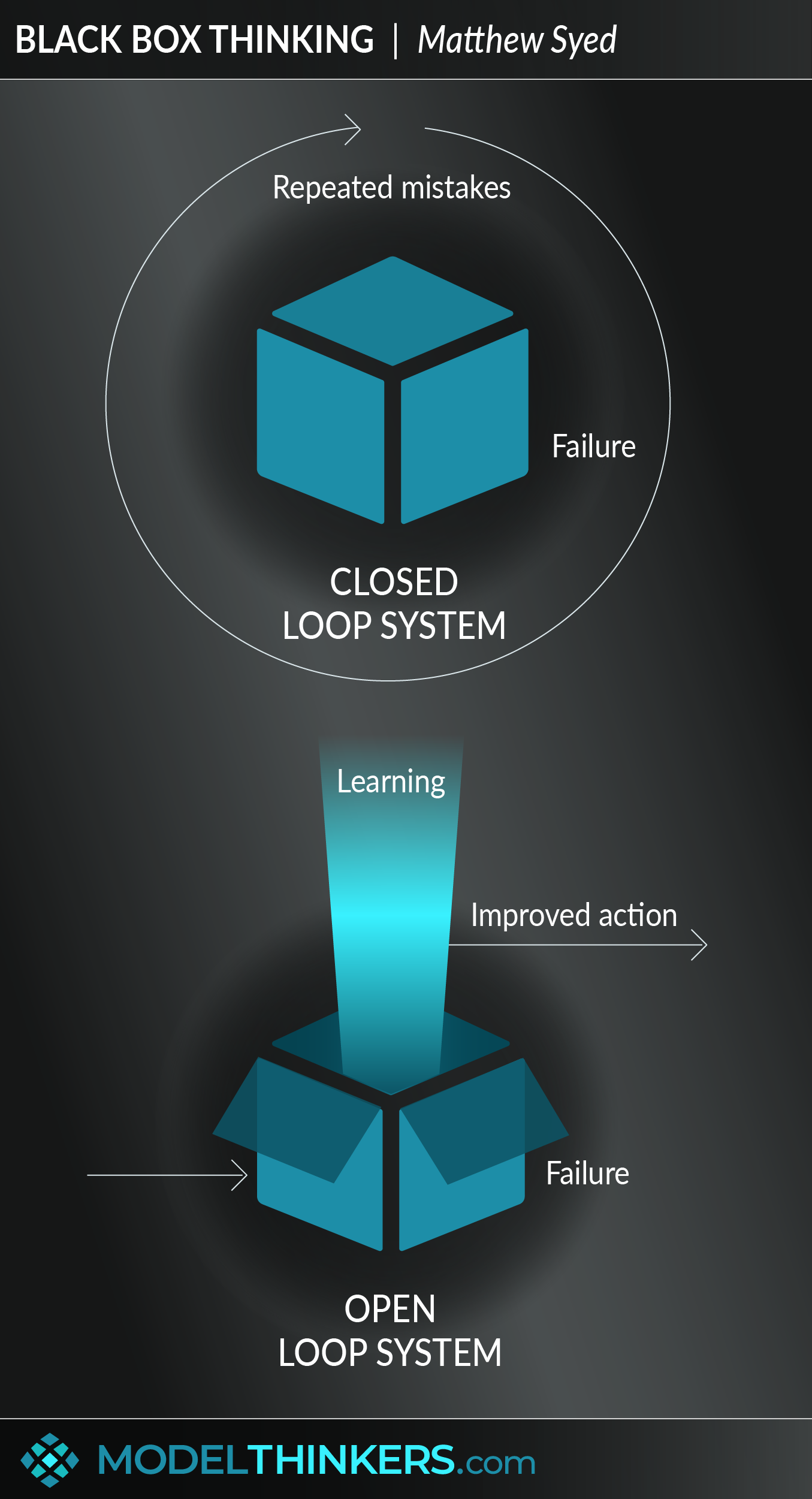

Syed goes on to describe typical closed-loop systems that cover up failure, ignore errors and do not progress. Such systems are sustained by cognitive dissonance, a habit of blame and the wider culture within an organisation.

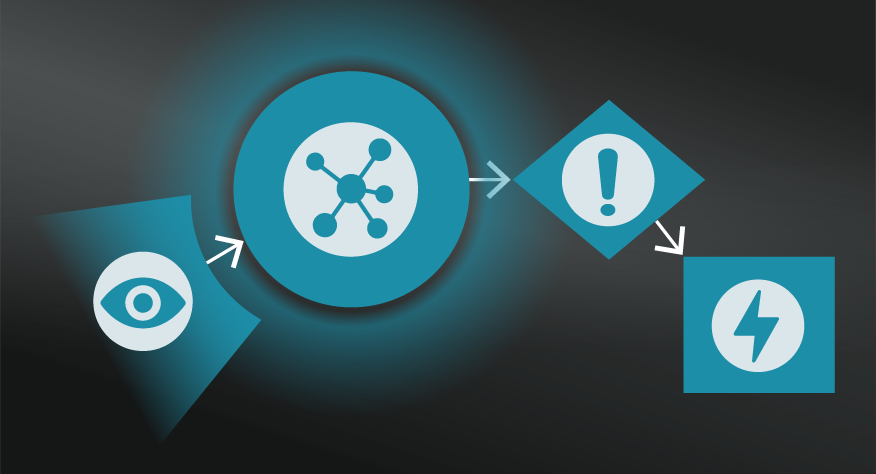

This contrasts with Black Box Thinking, which involves open-loop systems that collect data from failures, identify patterns and insights, and provide opportunities for learning and improvement.

IN YOUR LATTICEWORK.

Black Box Thinking helps to reframe failure and to design systems that enable continuous improvement. In that sense, it is aligned with Double Loop Learning as a process of continuous learning and with Agile Methodology as an iterative approach to improvement. Like those models, it also owes much to the Scientific Method.

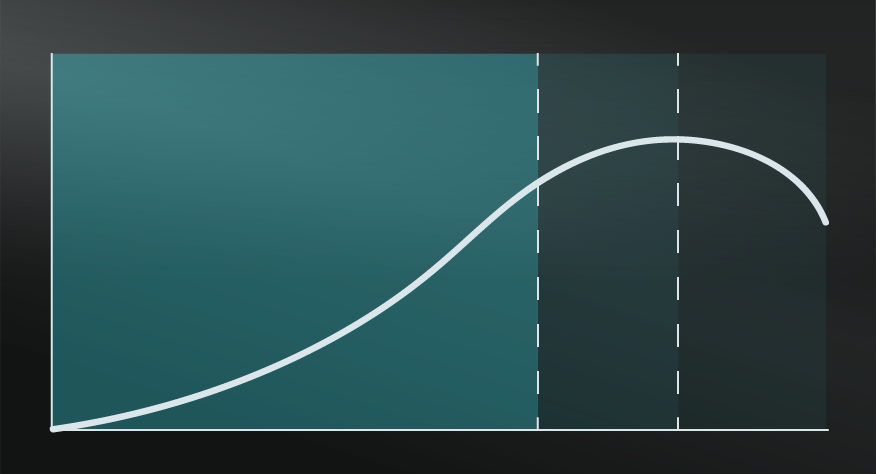

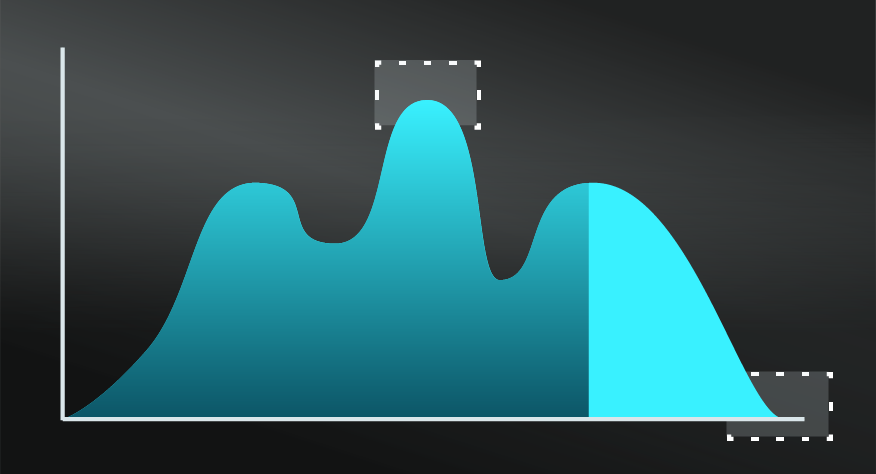

It aims to challenge and overcome the bias from the Confirmation Heuristic with data and transparency, and can be applied to leverage Compounding for consistent improvements.

- Exploit your failures.

While most people tend to blame others or circumstances when something goes wrong, a powerful alternative is to have a progressive and open attitude towards failure.

- Reduce blame.

Focus on describing events objectively and look for potential lessons at an organisational level rather than blaming individuals.

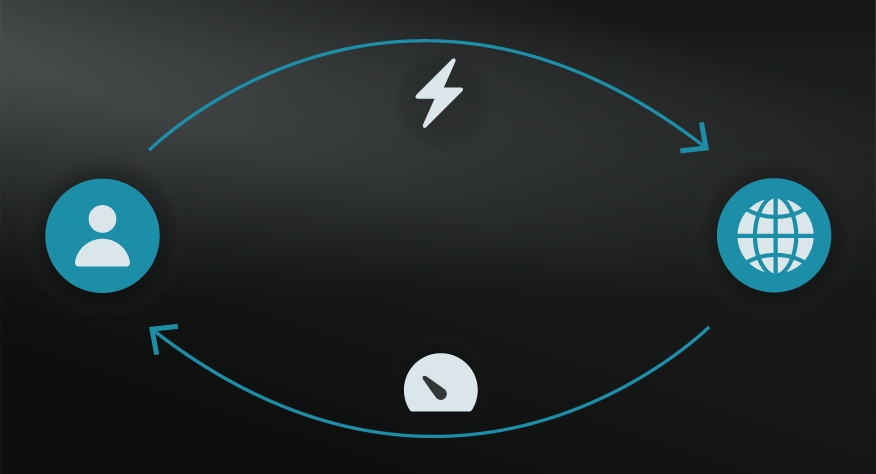

- Focus on open-loop systems.

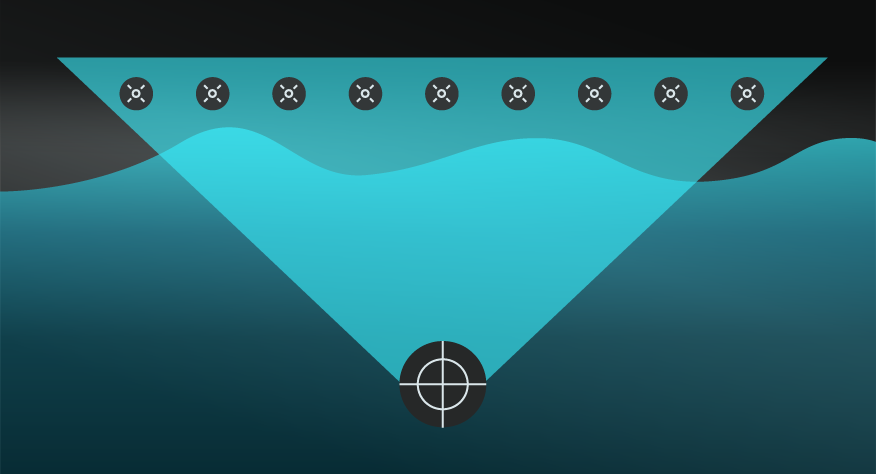

Design open-loop systems that uncover errors and failures in every project and initiative. Consider how your equivalent to an aviation ‘black box’ will track actions and decisions in order to continuously learn and improve. This includes testing assumptions, using feedback loops, and embracing minor errors as opportunities for marginal gains.

- Focus on mindset.

Encourage a shift in culture and mindset that supports perseverance, a growth mindset and psychological safety.

- Be aware of human limitations.

A common cause of mistakes is a lack of attention, which is a scarce resource. Aside from applying measures to increase diligence and motivation, make sure to create a system and culture that takes the limitations of human psychology into account.
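For teams that want a concrete starting point, the idea of an everyday ‘black box’ can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a method described by Syed: the `Event` fields, the `BlackBox` class and the sample entries are all hypothetical. It records each action with the assumption behind it, keeps the log append-only, describes causes at a system level rather than naming individuals, and surfaces causes that keep recurring.

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Event:
    """One recorded action and its result (hypothetical schema)."""
    action: str
    assumption: str   # the belief the action relied on
    outcome: str      # "ok" or "failed"
    cause: str = ""   # systemic cause, described without naming individuals
    at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class BlackBox:
    """Append-only log: events are recorded, never edited or deleted."""

    def __init__(self) -> None:
        self._events: list[Event] = []

    def record(self, action: str, assumption: str,
               outcome: str, cause: str = "") -> Event:
        event = Event(action, assumption, outcome, cause)
        self._events.append(event)
        return event

    def failures(self) -> list[Event]:
        return [e for e in self._events if e.outcome == "failed"]

    def recurring_causes(self, min_count: int = 2) -> list[tuple[str, int]]:
        """Causes that appear in at least min_count failures, most common first."""
        counts = Counter(e.cause for e in self.failures() if e.cause)
        return [(c, n) for c, n in counts.most_common() if n >= min_count]


# Illustrative entries only; the data is invented for this sketch.
box = BlackBox()
box.record("ship release 1", "tests cover checkout", "failed", "no staging environment")
box.record("ship release 2", "hotfix is isolated", "ok")
box.record("ship release 3", "config matches production", "failed", "no staging environment")
print(box.recurring_causes())  # [('no staging environment', 2)]
```

The design choice worth noting is that successes are logged alongside failures, so the tested assumptions are visible either way, and the pattern report points at a system gap rather than a person.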

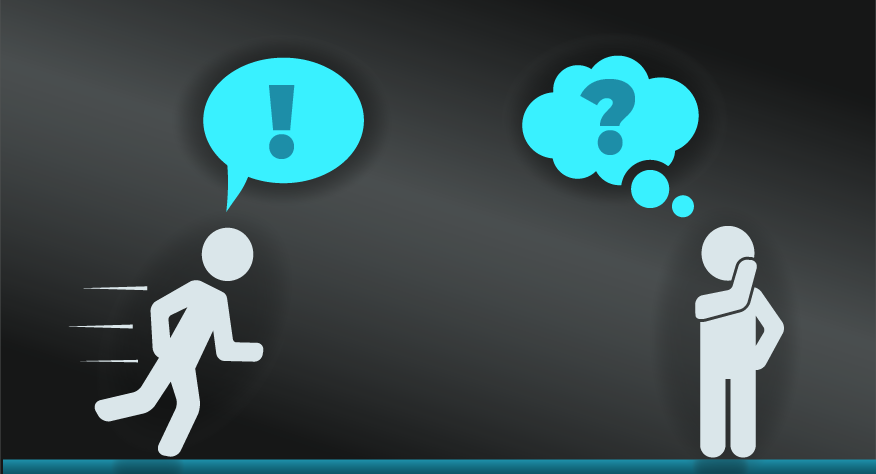

Black Box Thinking can be difficult to apply in everyday life because of cognitive dissonance. When we hold deep beliefs on a topic, we are more likely to unconsciously reframe the evidence than to alter our beliefs. When someone’s livelihood or ego is threatened by admitting mistakes, they will tend to avoid acknowledging failure in order to win in the short term, neglecting the long-term consequences. Shooting the messenger is another common mistake of those who are not willing to learn from failure.

Being aware of Black Box Thinking, and of other people’s inability to use this mental model, can cause anxiety and a lack of trust. For instance, being aware of a large number of medical errors can prevent you from seeking medical assistance when needed.

Doctors vs pilots.

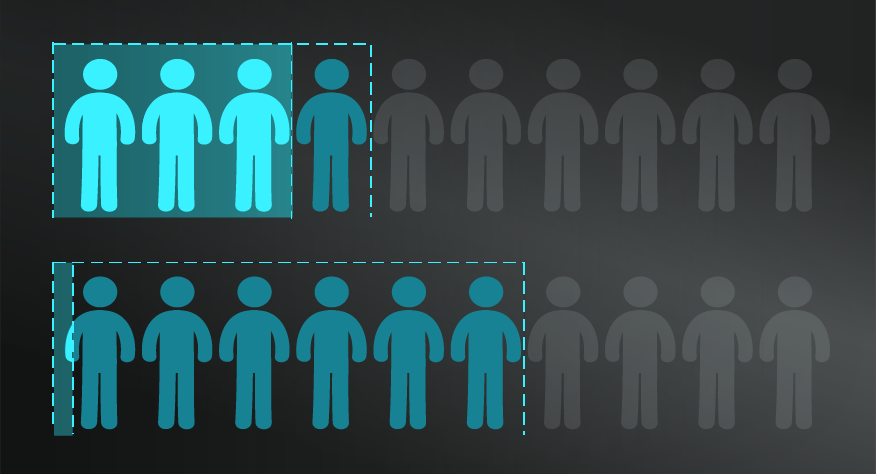

Syed targets health care, and doctors in particular, as a group with a problematic history and culture when it comes to learning from failure. He describes the medical profession as fearful of blame and therefore prone to describing errors or problems as ‘one offs’. In contrast to the black box approach of pilots, this means that each error remains untapped for lessons and improvements. At the same time, it reinforces a culture where this approach is accepted and expected.

Black box thinking is a mental model that helps to reframe failure and design systems that support continuous improvement.

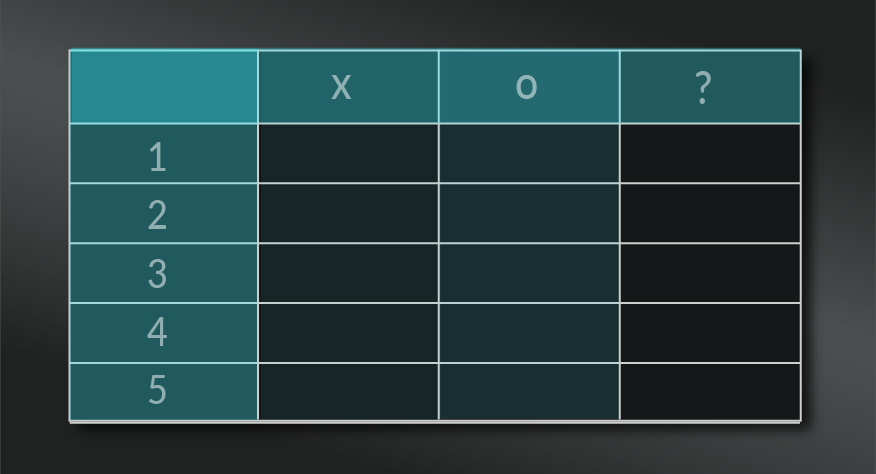

Use the following examples of connected and complementary models to weave black box thinking into your broader latticework of mental models. Alternatively, discover your own connections by exploring the category list above.

Connected models:

- Growth mindset: this is heavily referenced by Syed in his book and ongoing work.

- Cognitive dissonance and confirmation bias: as explanations of how we avoid seeing failure and errors.

- Psychological safety: along with a lack of blame, a crucial element of high performance.

- Split and A/B testing: to support open systems that reveal opportunities for growth and learning.

- Compounding: in terms of the opportunity for marginal gains through analysing small errors.

- Fast and slow thinking: to consider how to slow down and analyse failure as part of open systems.

- Lean thinking: as a focus for continuous improvement.

Complementary models:

- Redundancy/margin of safety: in considering how to minimise the impact of failure as well as learning from it.

- EAST framework (nudges): to support reporting and learning from failure.

- Mutually assured destruction: the tendency in some cultures not to call out others’ failures lest everyone suffer as a result.

The Black Box Thinking mental model was outlined by Matthew Syed in his bestselling book Black Box Thinking: Why Some People Never Learn from Their Mistakes - But Some Do. Syed has also spoken about Black Box Thinking in a London Business School TEDx talk.
