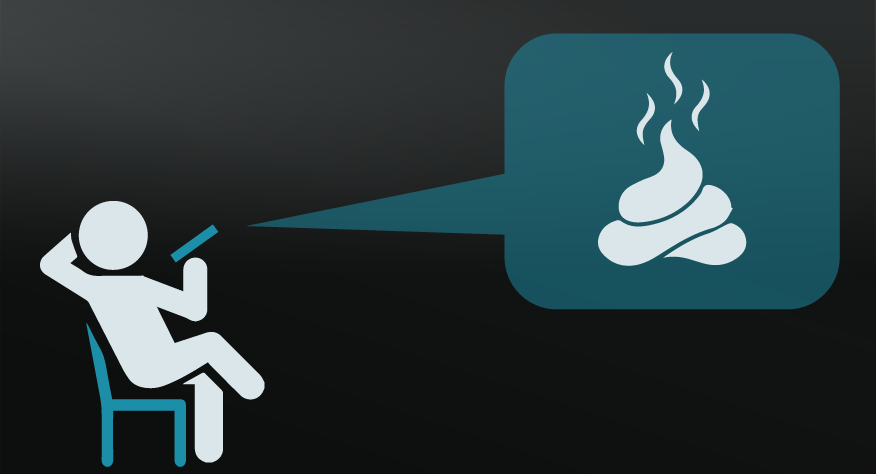

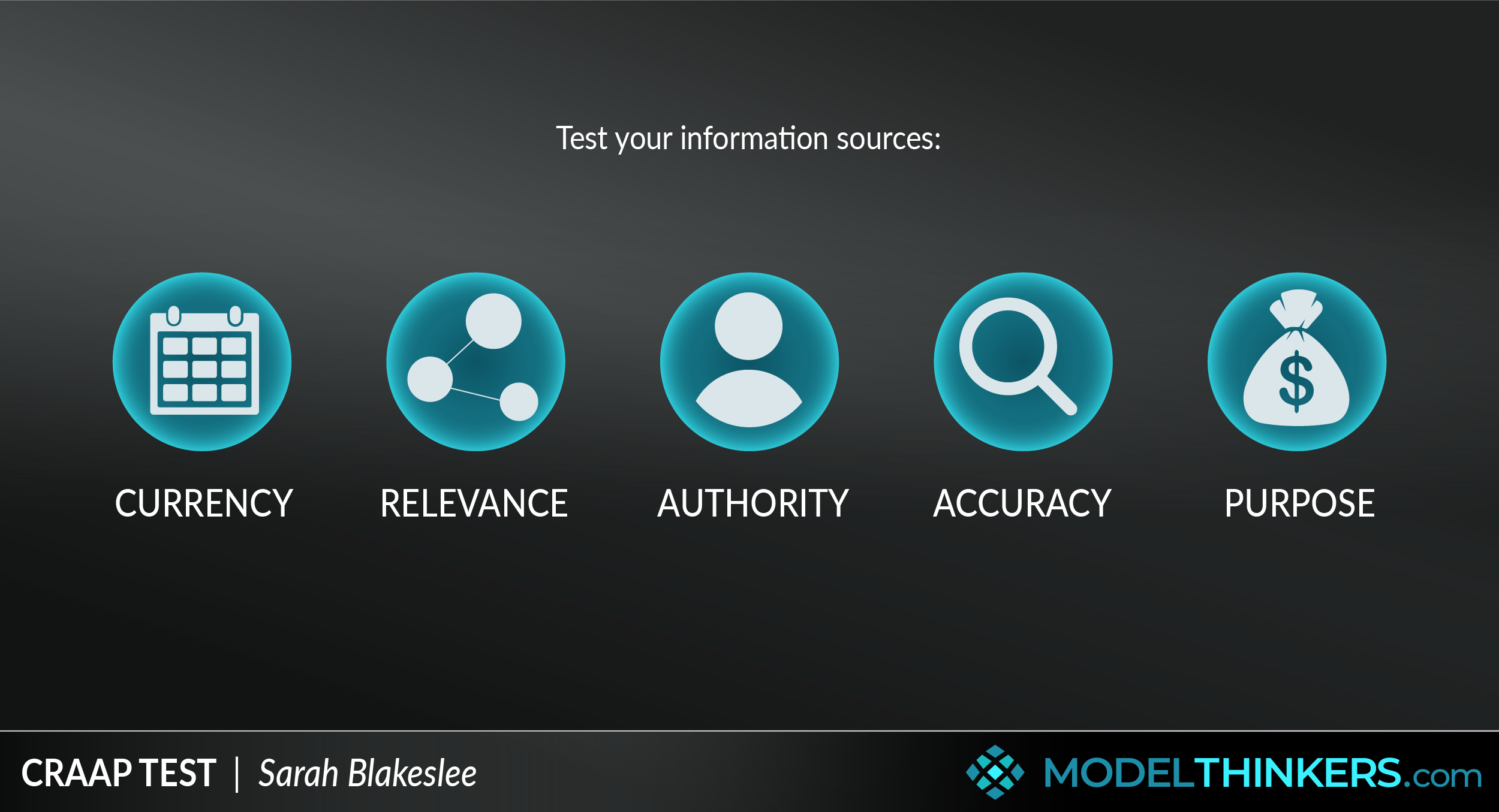

In these days of fake news and disinformation, it’s important to use tools to sort the truth from the… well, the crap. This model, originally developed by university-based librarians, sets out to do just that.

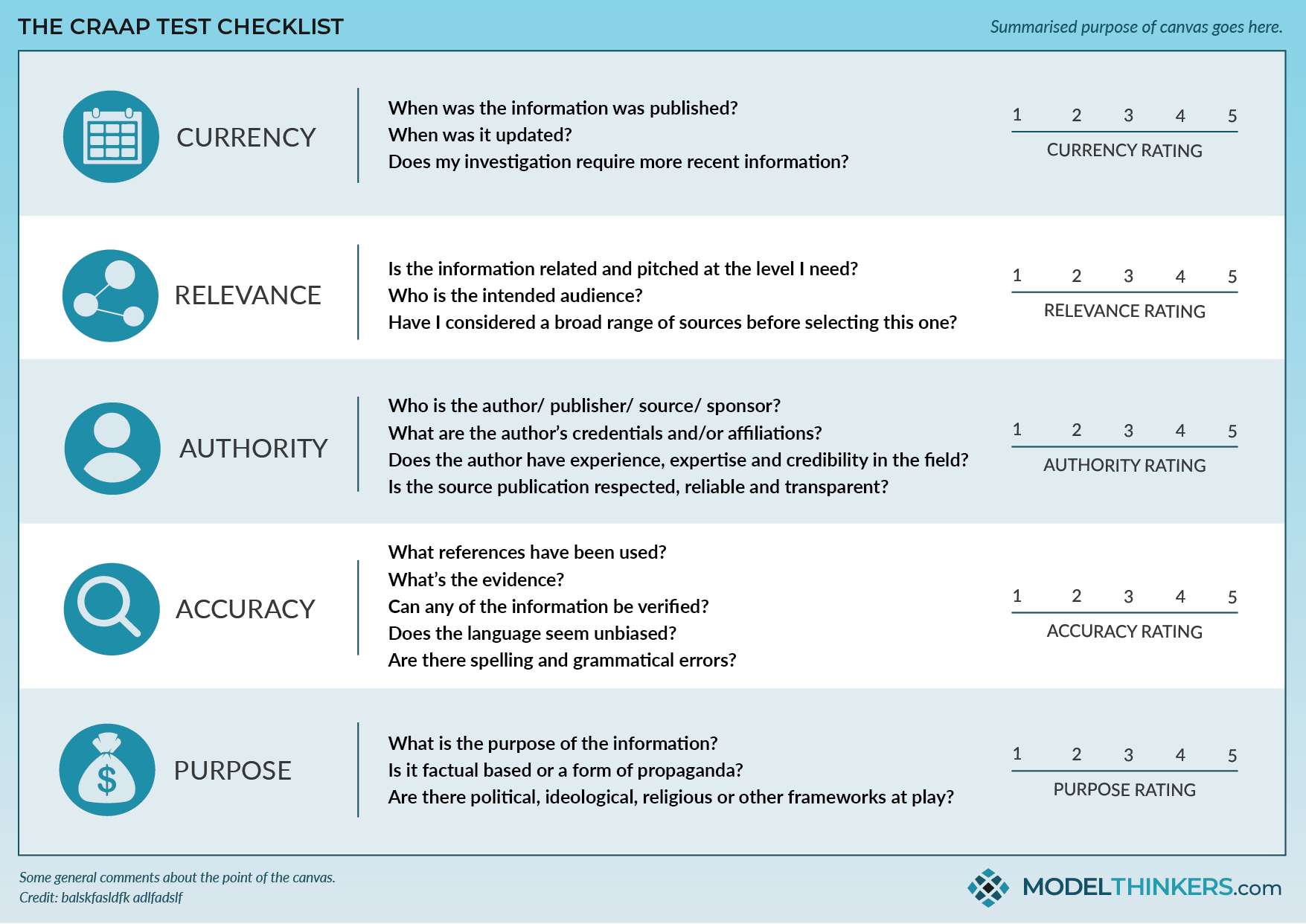

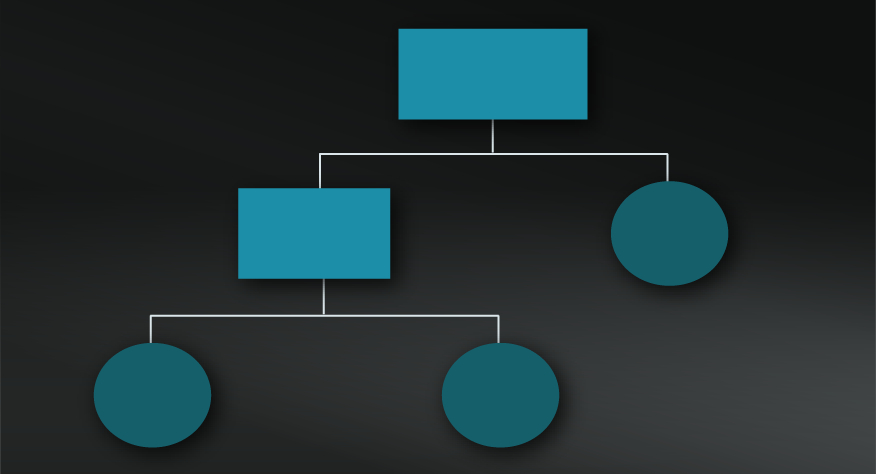

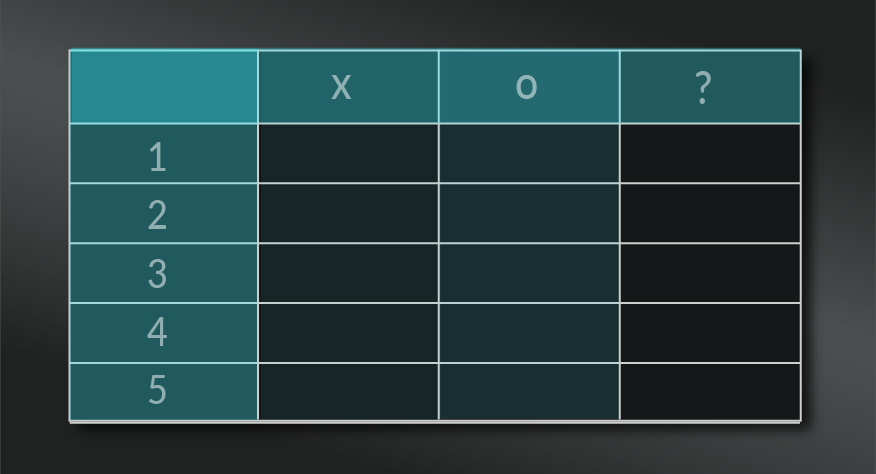

The CRAAP Test serves as a checklist to establish the reliability of information, based on an assessment of its currency, relevance, authority, accuracy and purpose.

ACADEMIC ORIGINS, BROAD APPLICATION.

Sarah Blakeslee, the originator of the CRAAP Test, explained: “Evaluation of information is one of the most important skills that we, as librarians and instructors, can teach our students.”

It is a popular tool amongst academics, students and, in particular, higher education librarians for assessing research sources. Unfortunately, in our world of crazy disinformation, it has also become far more relevant for understanding the facts behind basic world events.

USE THE CHECKLIST AND BEWARE CONFIRMATION BIAS.

See below in the tools section for a printable CRAAP Test checklist that you can pin up near your computer as a timely reminder. We find this model useful but add a layer to challenge the Confirmation Heuristic; see the limitations below for more.

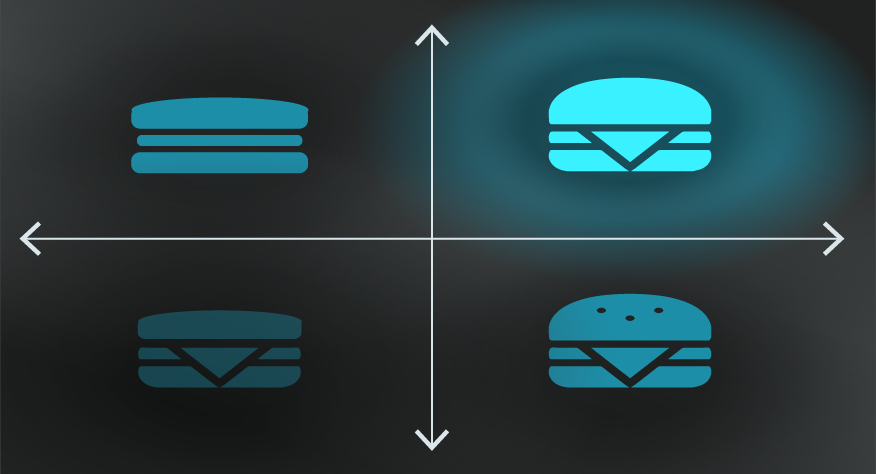

- Identify the question or topic you are exploring.

Define your area of exploration and check yourself for potential confirmation bias. Ensure that you gather a range of potential sources.

- Check your source for currency.

When was the information published? Has it been updated? Does your investigation require more recent information?

- Check your source for relevance.

Is the information related and pitched at the level you need? Who is the intended audience? Have you considered a broad range of sources before selecting this one?

- Check your source’s authority.

Who is the author/publisher/source/sponsor? What are the author’s credentials and/or affiliations? Does the author have experience, expertise and credibility in the field? Is the source publication respected, reliable and transparent?

- Check your source for accuracy.

What references have been used? What’s the evidence? Can any of the information be verified? Does the language seem unbiased? Are there spelling and grammatical errors?

- Check your source for purpose.

What is the purpose of the information? Is it to inform, persuade, entertain or sell? Is it fact-based or a form of propaganda? Are there political, ideological, religious or other frameworks at play?

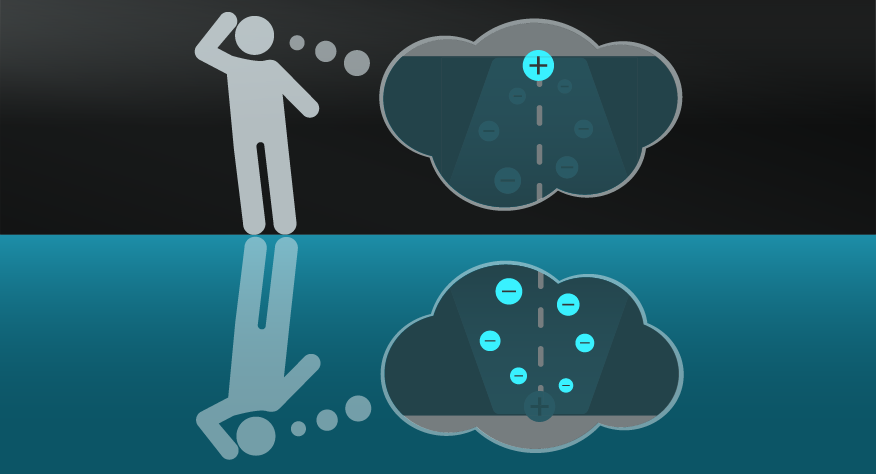

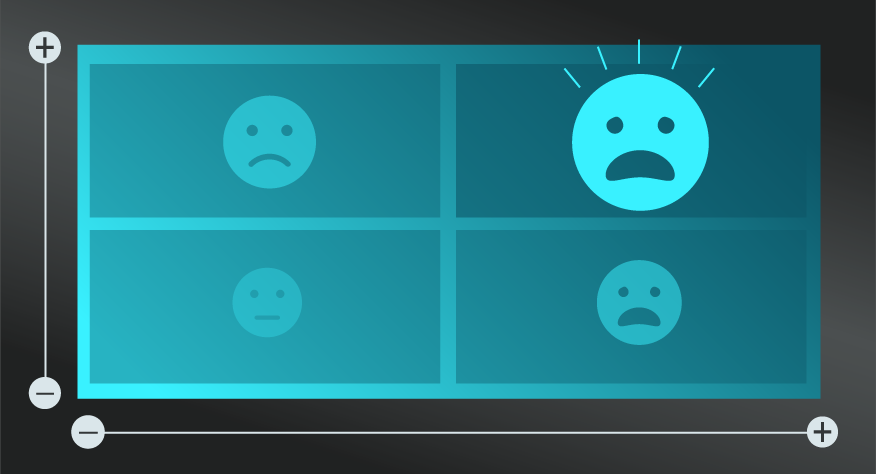

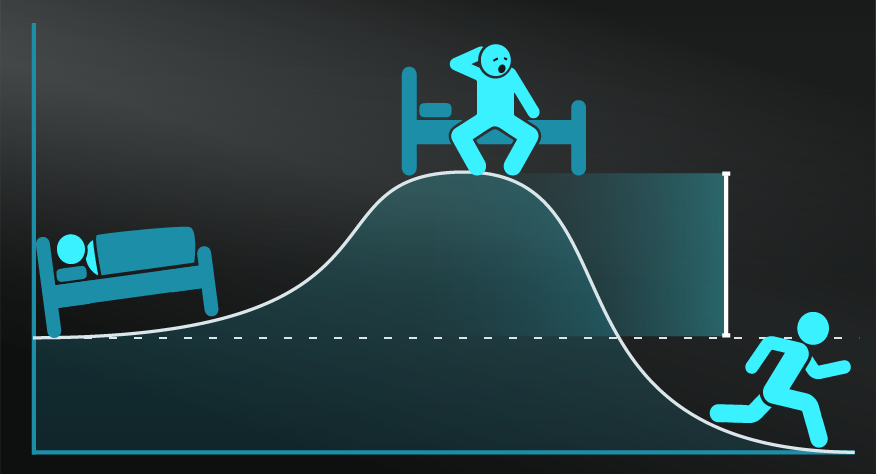

While we often use CRAAP as a loose checklist, we've found that one flaw in its design is its lack of consideration of the Confirmation Heuristic, the tendency to validate information based on existing beliefs. The argument is that CRAAP helps prevent this, but the CRAAP process itself can be infected by confirmation bias. Our addition? We add a step to first identify our beliefs, then challenge them, often with a few thought experiments. What if someone else wrote this? What if it argued the exact opposite?

Beyond our own criticism, the CRAAP Test has been challenged on the basis that a simple checklist is an inadequate way to assess information. In particular, this article, Rethinking CRAAP by Jennifer Fielding, drew attention to the method’s approach of diving deep into a single source and losing crucial context as a result. She explained:

“A 2017 Stanford working paper by Sam Wineburg and Sara McGrew highlights this evolution in stark relief, assessing the critical evaluation skills for web content between students, faculty, and professional fact-checkers. They found that faculty (arguably information-savvy critical thinkers) performed barely better than undergraduates in assessing the credibility of web content, primarily because they used the deep-dive type of assessment endorsed by methods like CRAAP—thoroughly examining the site itself.

“Fact-checkers, on the other hand, almost immediately began an independent verification process, a strategy the researchers dubbed “lateral reading”—opening multiple tabs, and searching for independent information on the publishing organization, funding sources, and other factors that might indicate the reliability and perspective of the site and its authors or sponsors.

“This lateral reading approach produced significantly better results for the fact-checkers—both in critical assessment and in the speed of their conclusions—than the “vertical reading” deep dive did for both the student group and the faculty group.”

Saving the tree octopus.

This worked example from Central Michigan University applies the CRAAP Test to several spoof websites, including ones about saving a tree octopus and a ‘dihydrogen monoxide research division’.

The CRAAP Test is a useful checklist for assessing information and sources, and for developing a skeptical mindset.

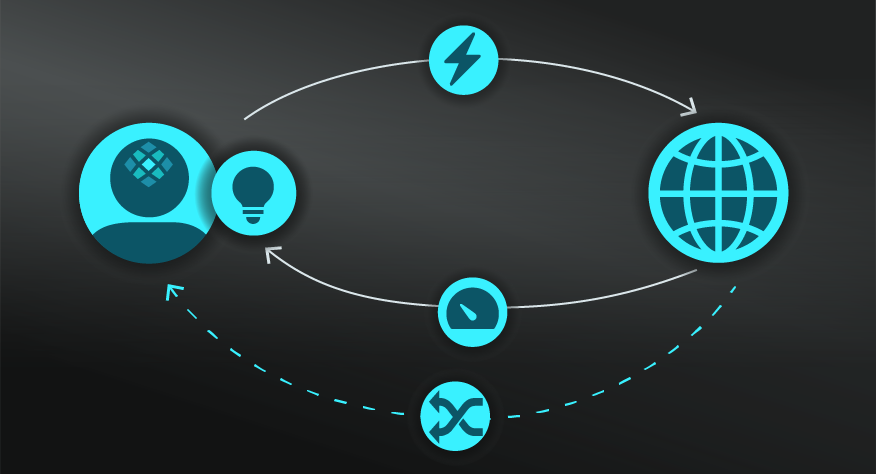

Use the following examples of connected and complementary models to weave the CRAAP Test into your broader latticework of mental models. Alternatively, discover your own connections by exploring the category list above.

Connected models:

- Thinking Fast & Slow and Confirmation Heuristic: understand your inherent bias in assessing information.

Complementary models:

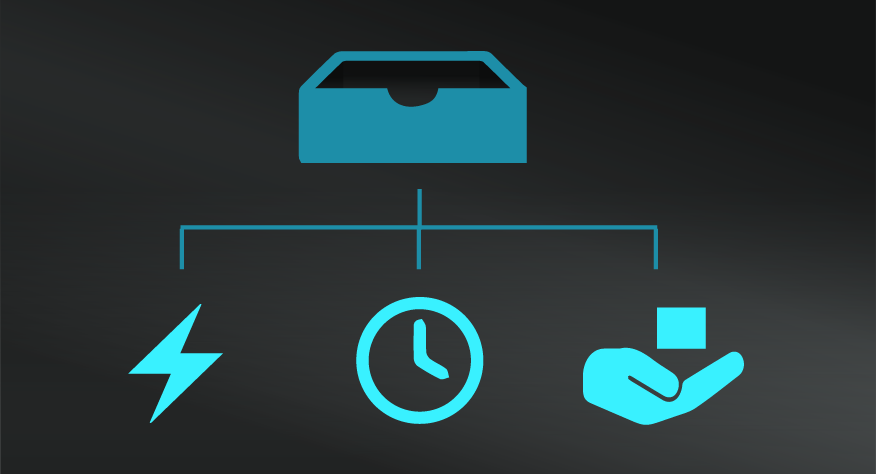

- Deep Work: providing the time for focused work and thinking.

- 5 Whys: digging beyond the face value information.

- Probabilistic Thinking: not seeing things as ‘right’ or ‘wrong’ but assigning a degree of truth to different sources.

The CRAAP Test was created in 2004 by Sarah Blakeslee and her team of librarians at the California State University. See the original source here. It was further simplified by Molly Beestrum in 2007 as the CRAP model, which omitted 'accuracy'.
